Our everyday interactions are increasingly mediated by technology, whether mobile phones, chat systems, or social networking sites. These systems are designed to anticipate and support our needs and desires while facilitating those interactions. As these systems grow in complexity, or intelligence, how does that intelligence change what passes through them? Further, how does that intelligence evolve to make its own work for its own needs?

This last question served as the launching point for my interactive robotic painting machine. Does an art-making machine of my design make work for me or for itself? How does machine vision differ from human vision, and is that difference visible in its output? Is my own consciousness reinforced by the system or does it become lost within? In other words, is this machine alive, with agency as yet another piece of the technium, or is it our own anthropomorphization of the system that makes us think about it in these ways?

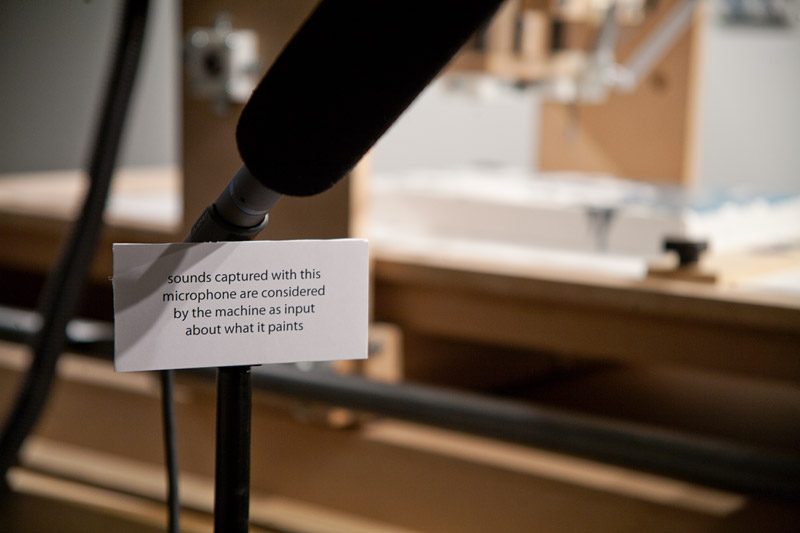

What I’ve built to consider these questions is an interactive robotic painting machine that uses artificial intelligence to paint its own body of work and to make its own decisions. While doing so, it listens to its environment and considers what it hears as input into the painting process. In the absence of someone or something else making sound in its presence, the machine, like many artists, listens to itself. But when it does hear others, it changes what it does, just as we are subtly (or not so subtly) influenced by what others tell us.

Machine in an Exhibition Context

Because of its interactive nature, the machine can function in multiple contexts. In an exhibition or gallery context, the system listens to whatever sounds come its way. This could include listening to visitors speaking directly into the mic, eavesdropping on nearby conversations, or, when nobody is there, listening to itself make a painting. In this video, the machine only had itself to listen to.

Lately I’ve taken to critiquing the machine as it paints, giving it audio input that is a direct response to what it just did. I’ll tell it what I think of each gesture it paints: if I liked it or didn’t, if I think it should have done something different, or how I see the latest mark fitting into the overall composition of the work. I’ve found that I tend to dislike these paintings more than others it makes, suggesting that listening to a constant critique of one’s creative process may not be productive.

Head Swap: Collaborative Work for Violin and Painting Machine

Head Swap (2011) is a work for amplified violin and interactive robotic painting machine that mixes music, art, technology, and robotics into one multidisciplinary performance. Instead of listening to the remarks or sounds made by visitors in a gallery, here the machine collaborates with violinist Benjamin Sung as he plays music composed by Zack Browning. Sung watches what the machine paints, using what he sees as guidance through the score. The machine listens to what Sung plays, using what it hears to help evaluate what it paints. Throughout, the machine also functions as a musical instrument itself, feeding pitched chords back into the work.

Head Swap

multidisciplinary performance work for amplified violin and interactive robotic painting machine

2011

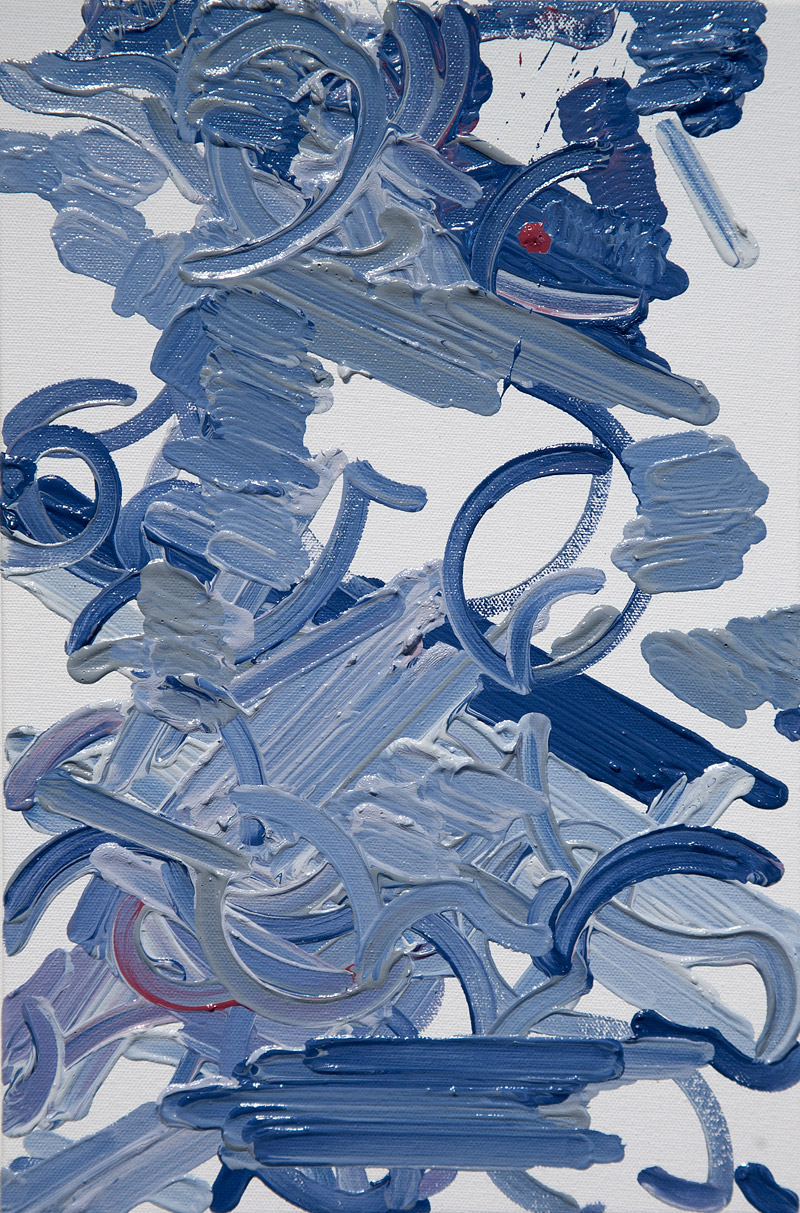

The result is a collaborative performance driven by back-and-forth interactions where the music affects the painting (and the sounds produced by the machine) while the painting affects the music. Because of differences in timing and performance, each painting made from one of these interactions is different (see below).

Painting Produced During Head Swap, 2011

oil on canvas, 10"x10"

Image Credit: Interactive Robotic Painting Machine

Painting Produced During Head Swap, 2011

oil on canvas, 10"x10"

Image Credit: Interactive Robotic Painting Machine

Head Swap was first performed at the Krannert Center for the Performing Arts on April 26, 2011. The piece was partially funded by a grant from the University of Illinois’ Campus Research Board.

How It Works

The system is built from a complex mix of hardware and software components, all networked together and managed from a central control system. This central software uses a genetic algorithm (GA) as its decision engine, making choices about what it paints and how it paints it. Audio captured by its shotgun microphone is subject to real-time fast Fourier transform (FFT) analysis, providing the system with useful data about what it hears. The resulting painting gestures are transformed into codes that can be sent to the Cartesian robot that manipulates a paintbrush in three dimensions. These codes break down each gesture into a series of primitive moves, describing everything from how much pressure to use on a brush stroke to how to put more paint on the brush.
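The real-time Fourier analysis mentioned above can be illustrated with a minimal sketch. The source does not say which audio features the machine extracts, so the two computed here (dominant frequency and RMS loudness) are assumptions chosen for illustration, as are the function names `fft` and `analyze_frame`. The FFT is a plain-Python radix-2 version so that the example needs no external libraries; a production system would more likely use an optimized library.

```python
import cmath
import math


def fft(samples):
    """Recursive radix-2 Cooley-Tukey FFT; len(samples) must be a power of two."""
    n = len(samples)
    if n == 1:
        return [complex(samples[0])]
    even = fft(samples[0::2])
    odd = fft(samples[1::2])
    out = [0j] * n
    for k in range(n // 2):
        twiddle = cmath.exp(-2j * math.pi * k / n) * odd[k]
        out[k] = even[k] + twiddle
        out[k + n // 2] = even[k] - twiddle
    return out


def analyze_frame(samples, sample_rate):
    """Return (dominant_frequency_hz, rms_level) for one audio frame.

    Both features are illustrative assumptions, not the machine's actual ones.
    """
    n = len(samples)
    if n < 4 or n & (n - 1):
        raise ValueError("frame length must be a power of two, at least 4")
    spectrum = fft(samples)
    half = n // 2
    magnitudes = [abs(c) for c in spectrum[:half]]
    # Skip bin 0 (the DC offset) when looking for the strongest component.
    peak_bin = max(range(1, half), key=lambda k: magnitudes[k])
    dominant_hz = peak_bin * sample_rate / n
    rms = math.sqrt(sum(s * s for s in samples) / n)
    return dominant_hz, rms


# Hypothetical test input: a 440 Hz sine wave, 1024 samples at 8192 Hz.
SAMPLE_RATE = 8192
frame = [math.sin(2 * math.pi * 440 * t / SAMPLE_RATE) for t in range(1024)]
dominant_hz, rms = analyze_frame(frame, SAMPLE_RATE)
```

With these parameters each frequency bin is 8 Hz wide, so the 440 Hz tone falls exactly on a bin. Real microphone input would rarely line up this neatly and would usually be windowed before the transform.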

Three separate but networked computers manage the system. The first runs the central control software, custom written in Python. Using the GA, this software begins each painting with a random collection of individual painting gestures, and proceeds to paint them. As it paints, it listens to its environment, considering what it hears as input into the gesture it just made. These sounds, along with its own biases, are considered at the end of each generation of gestures and used to produce a new set of gestures from the old. Thus a single painting is a rendering of many generations on a single canvas that illustrate a path towards an evolutionarily desirable (i.e., most fit) result. A second computer manages the brush camera and its projection and performs the audio analysis, sending that data to the central machine. A third computer acts as the low-level manipulator of the robot, accepting move commands from the central system and using those to drive stepper motors that move the robot in real time.
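The generational loop described above might look roughly like the following sketch. It is not the machine's actual code: the source does not describe how gestures are encoded or which genetic operators are used, so the list-of-floats genome, truncation selection, single-point crossover, random-reset mutation, and the weighted blend of audio score and internal bias are all assumptions. The names `evolve_painting`, `paint_and_listen`, and `bias` are hypothetical.

```python
import random


def evolve_painting(paint_and_listen, bias, generations=5, population_size=8,
                    genome_length=4, audio_weight=0.5, mutation_rate=0.1,
                    rng=None):
    """Paint several generations of gestures and return all of them.

    paint_and_listen(gesture) -> float: hypothetical callback that paints one
        gesture and returns a score derived from what was heard meanwhile.
    bias(gesture) -> float: hypothetical stand-in for the machine's own biases.
    Requires population_size >= 4 and genome_length >= 2.
    """
    rng = rng or random.Random()
    # Start from a random collection of gestures (assumed float genomes).
    population = [[rng.random() for _ in range(genome_length)]
                  for _ in range(population_size)]
    history = []
    for _ in range(generations):
        scored = []
        for gesture in population:
            heard = paint_and_listen(gesture)
            # Assumed blend of outside sound and internal bias.
            fitness = audio_weight * heard + (1 - audio_weight) * bias(gesture)
            scored.append((fitness, gesture))
        history.append([gesture for _, gesture in scored])
        # Truncation selection: the fitter half become parents.
        scored.sort(key=lambda pair: pair[0], reverse=True)
        parents = [gesture for _, gesture in scored[:population_size // 2]]
        children = []
        while len(children) < population_size:
            a, b = rng.sample(parents, 2)
            cut = rng.randrange(1, genome_length)  # single-point crossover
            child = a[:cut] + b[cut:]
            # Random-reset mutation on each gene.
            child = [rng.random() if rng.random() < mutation_rate else gene
                     for gene in child]
            children.append(child)
        population = children
    return history


# Usage with stand-in callbacks: a silent room and a bias toward large values.
history = evolve_painting(
    paint_and_listen=lambda gesture: 0.0,
    bias=lambda gesture: sum(gesture) / len(gesture),
    rng=random.Random(0),
)
```

Every generation is painted before selection happens, which is why the function returns the full history rather than only the final population: in the machine's case, all generations end up on the same canvas.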

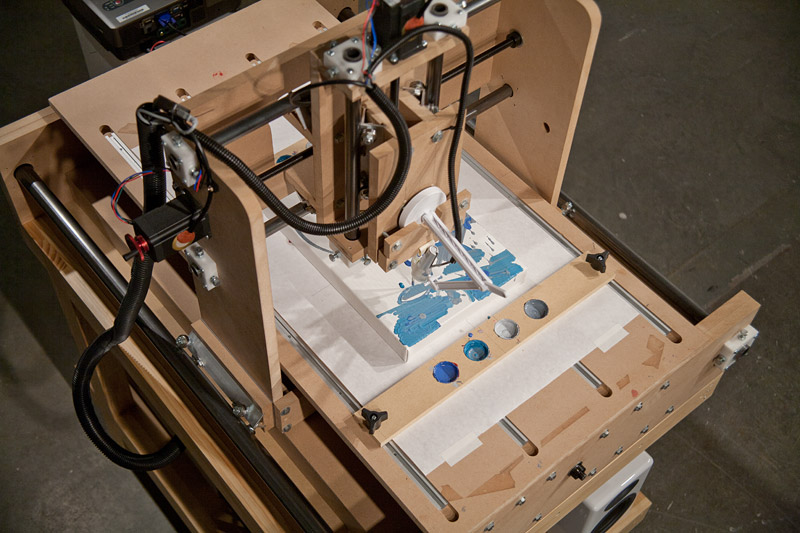

The robot itself was built by adapting an open source CNC design. In addition to fabricating and assembling each piece from scratch, I made significant custom modifications to the linear drive systems in order to facilitate fast rapids while maintaining repeatability. Luckily, because a paintbrush head is relatively large, my accuracy requirements are looser than those of a typical CNC design. This allowed me to design the drive system to be especially fast while using relatively low-cost and low-power motors.

It is important to understand that what the machine paints is not a direct mapping of what it hears. Instead, the system makes its own decisions about what it does while remaining open to influence from others. To understand this, I suggest you consider the machine an artist in its own right. Just as a human artist is influenced by what they hear (an influence that is sometimes easy to see and other times not so easy), the machine is influenced by what it hears. What it makes will be different in the absence of input, but it is not easy to trace how any input manifests as change.

Paintings

Below is a selection of works made by the machine. Some of these were made while it listened to itself, others while I critiqued its performance in real time.

Photographs

To give you a close-up view of the system, here are a few photographs of the machine itself.

Z Axis Motor