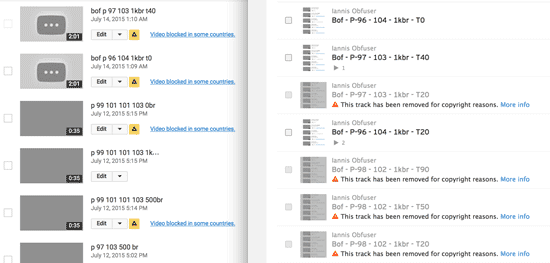

At the behest of corporate copyright holders, media sharing sites like YouTube and SoundCloud have implemented listening algorithms designed to identify uploaded music. However, these “Content ID” systems are designed to presume all use is illegal use; every match is automatically flagged, monetized, and/or muted and removed. Music Obfuscator makes audible what music sounds like when manipulated enough to hide its origin from Content ID. Each audio track the work alters is algorithmically processed using custom signal processing techniques. The degree of alteration is adjustable to accommodate changes in detection systems over time. How drastic do the audio changes need to be to evade detection? What kind of an aural world can exist on the edges of computational listening? Music Obfuscator helps us answer these questions.

The above video presents a selection of example tracks from a variety of music genres that were manipulated using the signal processing backend I developed for the work. Whereas the original music tracks these examples are based on can be easily and quickly detected by Content ID algorithms on sites like YouTube, SoundCloud, etc., these tracks, after processing by Music Obfuscator, cannot be detected. Further, music tagging apps like Shazam also fail to identify them. All this despite the fact that humans who are familiar with them typically have no problem identifying their origins within a few seconds. What can humans hear that Content ID algorithms cannot?

[Note in April 2021: Due to continued development of Content ID algorithms since Music Obfuscator was last worked on in 2016, some of these tracks—in the video version available above—are now identifiable on one or more media sites (though Shazam still fails to detect any of them). I expect parameter adjustment of the Music Obfuscator software would allow them to once again evade those detectors; when I next get a chance to try I’ll post an update here.]

Track Index

Below are the video time locations for each track. If you view this video on its page at Vimeo, you can click the track index numbers there to automatically jump from track to track. If you want to do that above, you’ll have to use the play-head to manually seek to the times below:

00:12 – Smells Like Teen Spirit

05:19 – Get Lucky

11:35 – Giant Steps

16:30 – Stairway to Heaven

24:38 – Headlines

28:41 – Blue in Green

34:26 – You’re Gonna Leave

39:11 – Blue Ocean Floor

Exhibitions and Presentations

- Piksel Festival, Bergen, Norway, 2015

- Wordhack, Babycastles Gallery, New York, 2016

Project Scope / Future Work

Originally I planned to finish this project by integrating the signal processing backend into a website where anyone could submit audio and get an obfuscated version back. Due to a variety of circumstances, this part of the project was never completed. I still hope to finish the project, but it’s been long enough that I’m not sure when that will be. Therefore I’ve decided to release this unfinished work to the public so that it can be more widely known.

Music Obfuscator Logo Image, 2015

Acknowledgements

My sincere thanks to Rhizome for their funding of this project through the Net Art Microgrant program!